Deploying a high-performance ML storage infrastructure

Amazon FSx for Lustre powers the most demanding HPC workloads, including ML training, AI, EDA, financial modeling, weather forecasting, and video rendering and transcoding

for tens of thousands of customers.

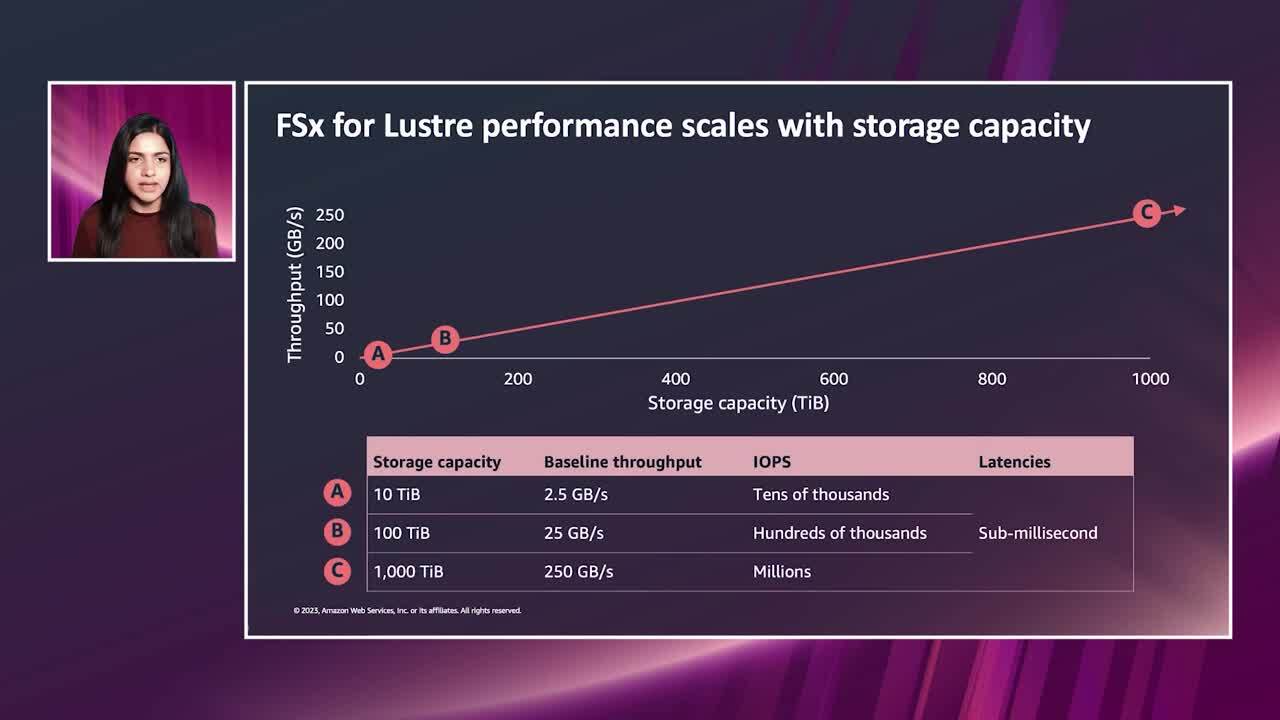

Enterprises of all sizes have adopted FSx for Lustre because it accelerates compute workloads with shared storage that provides sub-millisecond latencies, up to hundreds of GBs per second of throughput, and millions of IOPS.

In this session, we’ll discuss the benefits of a fully managed Lustre service that runs at scale and is easily configured with a few clicks of the management console. You will learn how to optimize Lustre price and performance with a range of deployment options, including storage type, performance tier, and replication level. We’ll show how customers are using FSx for Lustre to accelerate workloads and augment their compute during peak workload periods.